Well, I got myself a new Android phone a couple of weeks back, and I have been scouring the net for good apps that would help me on location during shoots or in front of the workstation. Here are a few interesting ones I came across.

This one comes with a nifty film calculator that works out how much film is needed for a given running time and film format, a depth of field calculator that does exactly what it says, and an awesome Film/Video Glossary that gives you instant offline access to definitions of all the industry-standard terminology.

I feel this is a must-have app for anyone in the film/video industry, as the glossary feature is invaluable at any time. It also features online help from Kodak support personnel and their online resources.
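To give an idea of what a film calculator works out, here is a minimal sketch. It is not the app's own code; the function name `film_feet_needed` and the table are my own, and it only assumes the standard figures of 16 frames per foot for 35mm 4-perf and 40 frames per foot for 16mm.

```python
# Frames per foot of film for two common formats (standard figures).
FRAMES_PER_FOOT = {
    "35mm 4-perf": 16,
    "16mm": 40,
}


def film_feet_needed(minutes, film_format, fps=24.0):
    """Return the feet of film exposed for a given running time.

    Hypothetical helper for illustration only.
    """
    total_frames = minutes * 60 * fps
    return total_frames / FRAMES_PER_FOOT[film_format]


if __name__ == "__main__":
    print(film_feet_needed(10, "35mm 4-perf"))  # 900.0
    print(film_feet_needed(10, "16mm"))         # 360.0
```

So ten minutes at 24 fps eats roughly 900 feet of 35mm 4-perf stock, or 360 feet of 16mm.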

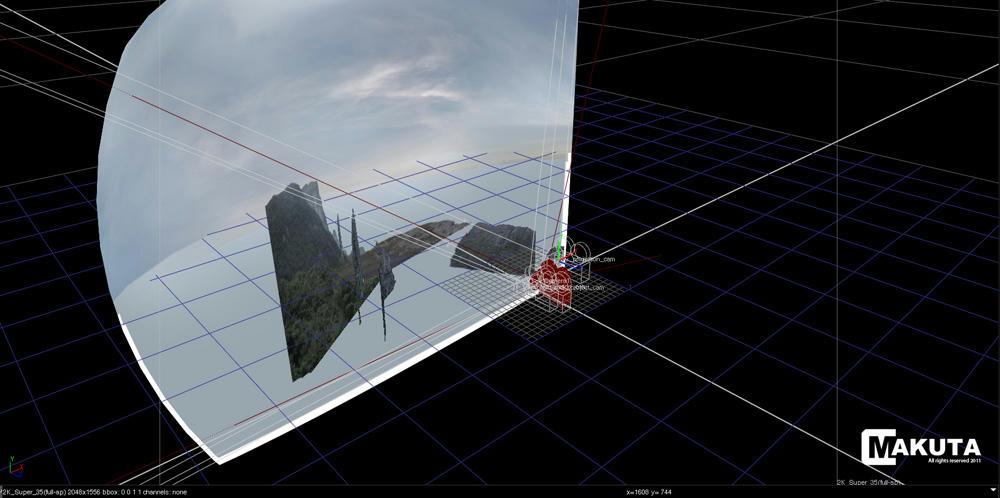

CamCalc is another free goody I came across. Apart from Depth of Field, Focal Length Equivalents, Flash Calculation and other cinematography calculators, I found the Field of View and Miniature calculators extremely useful for VFX folks. The FOV calculator can be used while matchmoving a camera or aligning a camera in a 3D scene, and the Miniature calculator will always come in handy during on-set VFX supervision to determine frame rates, distances and speeds for a given scale.
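For the curious, the math behind these two calculators can be sketched roughly as below. This is not CamCalc's actual implementation; the function names are made up for illustration. It assumes the usual rectilinear lens formula for horizontal field of view, and the common rule of thumb that a miniature at scale 1:N is shot at the base frame rate multiplied by the square root of N.

```python
import math


def horizontal_fov_degrees(focal_length_mm, sensor_width_mm):
    """Horizontal field of view of a rectilinear lens, in degrees.

    Hypothetical helper: FOV = 2 * atan(sensor_width / (2 * focal_length)).
    """
    return math.degrees(2 * math.atan(sensor_width_mm / (2 * focal_length_mm)))


def miniature_frame_rate(scale_denominator, base_fps=24.0):
    """Suggested shooting frame rate for a miniature built at 1:scale_denominator.

    Rule of thumb (an assumption, not taken from the app):
    fps = base_fps * sqrt(scale_denominator).
    """
    return base_fps * math.sqrt(scale_denominator)


if __name__ == "__main__":
    # An 18 mm lens on a 36 mm wide sensor.
    print(round(horizontal_fov_degrees(18, 36), 1))  # 90.0
    # A 1:16 miniature with a 24 fps base rate.
    print(miniature_frame_rate(16))                  # 96.0
```

The FOV value is what you would type into the 3D camera when lining it up against the plate, provided the sensor width matches the real camera's.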

The video below says it all.

It would be very useful on location shoots for taking quick notes on the measurements of props or the set itself.

As the name suggests, this is a multi-function app with the following features: Ruler, Protractor, Flashlight, Compass, Gradienter, Wall picture, Vertical and Telemeasurement.

I think that among the above features, Ruler, Vertical and Telemeasurement can be used on set. Vertical makes it easy to check whether items are vertical, and it shows the angle of deviation with the help of the phone camera. I haven't personally used Telemeasurement, but according to the app description it takes three simple steps to measure the distance to any object and its height with the help of the phone camera. This again can be used on location. I doubt how accurate it is, but it is still a time saver for those approximate measurements.

Alarm Clock Plus

Last but not least, this is the best alarm clock for Android out there, so never be late for an early morning call time again ;)

PS: I think there are still a lot of good film/VFX-centric apps out there that I haven't discovered yet, so I will try to update this post if I find any. If you know of any such app, please do drop a comment here.