It's ironic that before reading this memo by Alvy Ray Smith I never wondered what really makes up a pixel. I always believed the computer stores color information as little squares, but actually a pixel is only a mathematical representation of color information. As Smith points out, this is mainly a misconception caused by the way computer applications show pixels when we magnify an image.

According to Smith,

'A pixel is a point sample. It exists only at a point. For a color picture, a pixel might actually contain three samples, one for each primary color contributing to the picture at the sampling point. We can still think of this as a point sample of a color. But we cannot think of a pixel as a square—or anything other than a point.'

He also states that an image is a rectilinear array of point samples, and that by using an appropriate image reconstruction filter we can rebuild a continuous, full-color image from it. It is interesting to note that the simplest of these filters, the box filter, represents each point sample as a rectangle, which can be almost a square. I believe this is the reason why, when we zoom in on an image, we see square pixels, each showing the color value of the point sample in that particular area. Smith's explanation of what happens when you zoom in is this:

'Each point sample is being replicated MxM times, for magnification factor M. When you look at an image consisting of MxM pixels all of the same color, guess what you see: A square of that solid color! It is not an accurate picture of the pixel below. It is a bunch of pixels approximating what you would see if a reconstruction with a box filter were performed. To do a true zoom requires a resampling operation and is much slower than a video card can comfortably support in realtime today.'
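To make the replication Smith describes concrete, here is a minimal sketch in plain Python. The function name `zoom_by_replication` and the tiny 2x2 grayscale grid are my own illustration and do not come from the memo; a real viewer would do this on the video card.

```python
def zoom_by_replication(samples, m):
    """Magnify a 2D grid of point samples by copying each sample m x m times."""
    zoomed = []
    for row in samples:
        # Repeat every sample m times horizontally...
        wide_row = [value for value in row for _ in range(m)]
        # ...and repeat the widened row m times vertically.
        zoomed.extend(list(wide_row) for _ in range(m))
    return zoomed


# Hypothetical 2x2 grayscale image: four point samples, not four squares.
image = [[10, 200],
         [90, 30]]

zoomed = zoom_by_replication(image, 3)
for row in zoomed:
    print(row)
```

Each original sample turns into a 3x3 block of identical values, which is exactly the solid "square" we see on screen. The squares come from the zoom, not from the pixel itself.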

I think today it is not really important to understand this very basic issue since it is something our today's image manipulation applications manage quite efficiently underneath the user interface. But at the same time understanding this basic issues may help us to understand other important techniques such as 4:2:2 color sampling which I discussed in my

last post. The

Bayer filter used in 4:2:2 digital image sensors of digital cameras are similar to an image reconstructions filters such as

bilinear interpolation,

bicubic interpolation and

spline interpolation. They all build full color image from incomplete color samples (sample points) and this is process or algorithm is called

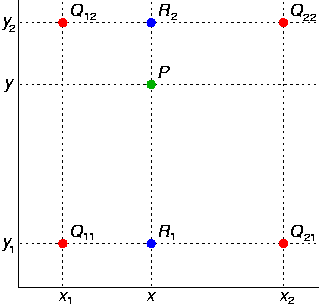

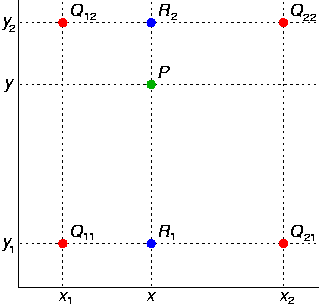

demosaicing.The below two images from wikipedia which explains this visually

The four red dots show the data points and the green dot is the point at which we want to interpolate.

Example of bilinear interpolation on the unit square with the z-values 0, 1, 1 and 0.5 as indicated. Interpolated values in between represented by colour.
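The unit-square example can also be worked through in a few lines of Python. This is only a sketch: the function name `bilinear` is mine, and I am assuming the four z-values sit at the corners (0,0), (1,0), (0,1) and (1,1) in that order, since the caption alone does not say which value belongs to which corner.

```python
def bilinear(x, y, f00, f10, f01, f11):
    """Interpolate inside the unit square; fXY is the sample at corner (X, Y)."""
    # First interpolate along x on the bottom edge (y = 0) and top edge (y = 1).
    bottom = f00 * (1 - x) + f10 * x
    top = f01 * (1 - x) + f11 * x
    # Then interpolate between those two results along y.
    return bottom * (1 - y) + top * y


# Assumed corner assignment for the z-values 0, 1, 1 and 0.5.
corners = dict(f00=0.0, f10=1.0, f01=1.0, f11=0.5)

center = bilinear(0.5, 0.5, **corners)
print(center)  # 0.625, the average of the four corner values
```

At the corners the function returns the original samples unchanged, and everywhere in between it blends them smoothly. That is the same idea a demosaicing step uses to fill in a missing color from its neighbours.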

Who is Alvy Ray Smith?

He was one of the cofounders of Pixar and also its Executive Vice President from 1986 to 1991, and the founder of Altamira, which was acquired by Microsoft. He was co-awarded the Computer Graphics Achievement Award by SIGGRAPH in 1990 for "seminal contributions to computer paint systems," including the first full-color paint program, the first soft-edged fill program, and the HSV (aka HSB) color space model.

Most interestingly, he gave Pixar its name, an invented Spanish verb meaning "to make pictures". Also, while at Pixar he played an important role in hiring John Lasseter, who is now the CCO at Pixar and Walt Disney Animation Studios.

Finally, check out the Pixar founding documents hosted on his website.

Cheers,

Rahul